Tokenization: An ETF-Style Market Structure Revolution

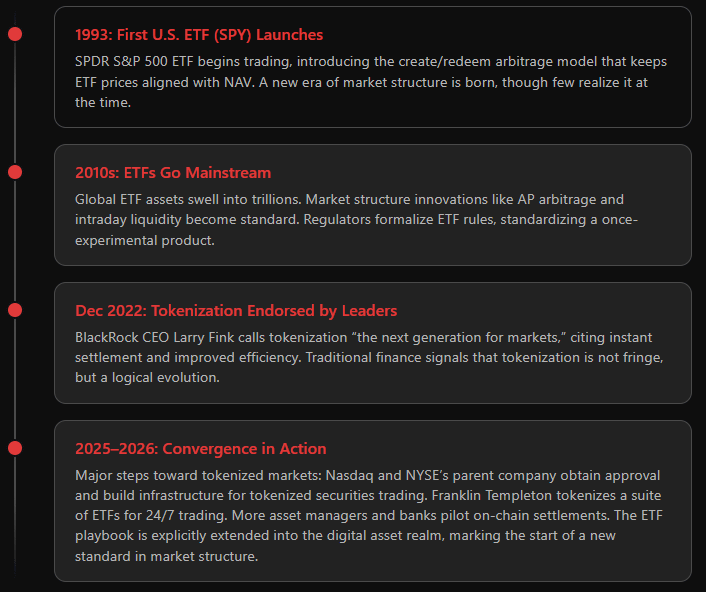

In the 1990s, exchange-traded funds (ETFs) were a novel idea. Many saw them simply as a new wrapper for traditional assets, a convenient repackaging of mutual funds. In reality, ETFs triggered a market structure revolution. By introducing creation/redemption mechanisms and arbitrage-driven liquidity, ETFs fundamentally changed how markets functioned and how investors accessed assets.

Today, tokenization of securities is often hyped as a new asset class.

But the more insightful view is that tokenization should be understood as the next iteration of market structure, much like ETFs were, rather than as a new asset category.

To be clear: Tokenization isn’t inventing new economic assets; it’s inventing a more efficient way to issue, trade, and settle the assets we already understand. This is a shift in market plumbing and mechanics, with deep implications for liquidity, pricing, and institutional capital flows.

ETFs as a Market-Structure Innovation

ETFs did not emerge in a vacuum. Their success was rooted in a prior shift in investor behavior. As indexing gained traction, investors increasingly demanded low-cost, diversified, and transparent exposure to markets, rather than expensive active management. The limitation was not the idea, but the implementation: traditional mutual funds were not designed for this at scale. ETFs solved that problem. The mechanisms below explain how.

Creation & Redemption Arbitrage: ETF shares can be created or redeemed in-kind by authorized participants (APs). When the market price of an ETF diverges from the value of its underlying basket, APs arbitrage the difference by buying the cheaper leg and selling the richer one.

This mechanism keeps ETF prices aligned with their underlying assets and prevents persistent premiums or discounts. The result is consistent tracking and pricing discipline enforced not by regulation, but by arbitrage.
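To make the arbitrage rule concrete, here is a minimal Python sketch of the decision an AP faces. It assumes a single all-in cost threshold (`cost_bps`, standing in for trading costs, creation/redemption fees and hedging) and an indicative NAV for the basket. The names `Quote` and `ap_action` are hypothetical, and real AP decisions also involve inventory, balance-sheet and settlement constraints that are not modeled here.

```python
from dataclasses import dataclass


@dataclass
class Quote:
    etf_price: float  # market price of one ETF share
    nav: float        # per-share value of the underlying basket (indicative NAV)


def ap_action(q: Quote, cost_bps: float) -> str:
    """Return 'create', 'redeem' or 'hold' for a hypothetical authorized participant.

    cost_bps is an assumed all-in round-trip cost, in basis points of NAV.
    """
    premium_bps = (q.etf_price - q.nav) / q.nav * 10_000
    if premium_bps > cost_bps:
        # ETF is rich: buy the basket, deliver it in-kind, receive new ETF shares, sell them.
        return "create"
    if premium_bps < -cost_bps:
        # ETF is cheap: buy ETF shares, redeem them in-kind for the basket, sell the basket.
        return "redeem"
    # Deviation sits inside the cost band: no profitable trade.
    return "hold"


print(ap_action(Quote(etf_price=100.20, nav=100.00), cost_bps=10))  # create
```

The cost band is why premiums and discounts stay small but rarely sit at exactly zero: deviations inside the band are simply not worth arbitraging.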

Liquidity Concentration: ETFs demonstrated that liquidity can concentrate at the wrapper level. Even when underlying assets are fragmented or less liquid, the ETF itself trades on an exchange with continuous market making.

Over time, ETFs became a primary locus of trading activity. In many cases, liquidity in the ETF exceeded that of its constituents, because arbitrage ensured that positions could be efficiently converted between the wrapper and the underlying.

Structure, not size, became the driver of liquidity.

Investor Access and Cost: By externalizing trading to the exchange, ETFs lowered transaction costs and reduced barriers to entry. Intraday trading, transparent pricing, and single-ticket access to broad exposures improved usability for investors.

These features were only sustainable because the underlying market structure (APs, arbitrage, exchange trading) preserved price efficiency despite the added flexibility.

The takeaway is that ETFs were not just another product, but a new market mechanism. They blurred the line between primary and secondary markets and embedded arbitrage into the system as a stabilizing force.

Crucially, this mechanism succeeded because it aligned with a clear shift in investor demand. The structure did not create the demand; it scaled and operationalized it.

Tokenization’s Core Parallels: Mint, Burn, and Arbitrage

At first glance, tokenization closely mirrors ETF market structure. The same core mechanisms — minting and burning, arbitrage, and a liquid wrapper around underlying assets — are present.

However, two structural differences are critical. First, tokenized assets trade across fragmented venues rather than a single exchange. Second, arbitrage depends on the ability to move assets seamlessly across systems. These factors determine whether price convergence and liquidity actually hold.

Minting and burning = creation and redemption: A robust tokenized asset isn’t simply “issued” once like a stock or bond; it typically can be minted or burned on demand against some pool of underlying assets or rights. This is directly analogous to ETF creation/redemption. For example, when a token represents shares of a fund or stock, authorized participants (or smart contracts acting as such) should be able to deposit the underlying and mint new tokens, or redeem tokens for the underlying assets. If the token trades above the value of its underlying holdings, arbitrageurs will mint new tokens and sell them (injecting supply) until prices realign; if it trades below, they will buy tokens and redeem them (reducing supply) until the discount closes. The economic principle is identical to ETFs. The token is a wrapper on the same assets, and arbitrage keeps its price honest.
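The mint/burn feedback loop can be illustrated with a deliberately simple Python toy model. The linear price impact (`impact_per_token`) and the tolerance `band` are assumptions made purely for illustration, and `arbitrage_to_band` is a hypothetical helper rather than any protocol's actual logic; real markets have non-linear impact, fees and minting delays.

```python
def arbitrage_to_band(token_price: float, nav: float, supply: int,
                      impact_per_token: float, band: float, lot: int = 1_000):
    """Toy mint/burn loop: mint or burn one lot at a time until the token
    trades within `band` of NAV.

    Assumes (hypothetically) linear price impact: selling newly minted tokens
    lowers the price by impact_per_token per token, and buying tokens to burn
    raises it by the same amount.
    """
    step = impact_per_token * lot
    if step <= 0 or step > band:
        # A step larger than the band could overshoot back and forth forever.
        raise ValueError("need 0 < impact_per_token * lot <= band")
    while abs(token_price - nav) > band:
        if token_price > nav:
            supply += lot       # mint: deposit underlying, receive new tokens, sell them
            token_price -= step
        else:
            supply -= lot       # burn: buy tokens on the market, redeem them for the underlying
            token_price += step
    return token_price, supply


# Token starts at a 1% premium; minting expands supply until the premium is inside the band.
price, new_supply = arbitrage_to_band(101.0, 100.0, 1_000_000, 0.0001, 0.05, lot=100)
```

Capping the per-lot impact at the band width keeps the loop from oscillating; a rich token only ever triggers minting and a cheap one only ever triggers burning.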

Multiple venues, one price: Tokenized assets can theoretically trade on decentralized exchanges, centralized crypto exchanges, or even OTC, potentially a far more fragmented venue landscape than the single exchange listing of a typical ETF. However, if the arbitrage mechanism is working and participants have access to all venues, then price discrepancies are opportunities that quickly get resolved. In fact, in an ideal setup, tokenization could unify liquidity globally: a token could trade 24/7 across platforms but, thanks to arbitrage and interoperable settlement, behave as one continuous market. The flip side is also true: if there are barriers to moving between venues (say, a token on one blockchain can’t be easily transferred to another, or regulatory silos prevent arbitrage across regions), then prices can diverge and liquidity will fragment. The technology itself doesn’t guarantee unity; the market structure around it does.
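The role of transfer barriers can be made explicit with a small calculation. The Python sketch below computes the per-token profit of buying on one venue, moving the token, and selling on another. The per-leg fee (`fee_bps`) and the per-token `transfer_cost` (bridge, withdrawal or settlement fees) are assumed inputs, and `transfer_cost=None` is a hypothetical convention for "no transfer route exists".

```python
from typing import Optional


def cross_venue_edge(ask_on_cheap_venue: float, bid_on_rich_venue: float,
                     fee_bps: float, transfer_cost: Optional[float]) -> Optional[float]:
    """Per-token profit from buying on one venue, moving the token, and selling on another.

    fee_bps: assumed trading fee charged on each leg, in basis points.
    transfer_cost: assumed per-token cost of moving the token between venues;
        None means no transfer route exists.
    """
    if transfer_cost is None:
        # No executable route: nothing forces the two prices together.
        return None
    fees = (ask_on_cheap_venue + bid_on_rich_venue) * fee_bps / 10_000
    return bid_on_rich_venue - ask_on_cheap_venue - fees - transfer_cost


edge = cross_venue_edge(100.0, 100.5, fee_bps=5, transfer_cost=0.1)
```

A positive edge gets traded away, so between connected venues the price gap is bounded by fees plus transfer cost. With no transfer route there is no such bound, which is exactly the fragmentation case described above.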

Wrapper design: In both ETFs and tokenization, the wrapper is simply a liquid representation of a basket of economic exposures. An ETF share is not the underlying securities themselves, but a standardized claim on a basket that trades efficiently because creation and redemption keep it aligned with the underlying assets. Tokenization follows the same logic. The token becomes the liquid instrument, while the underlying assets remain the economic anchor. What matters is not the form of the wrapper, but the strength of the arbitrage link between wrapper and basket. As with ETFs, supply must be able to expand or contract when prices deviate. That two‑way convertibility — or a functional equivalent — is what anchors value. The core lesson from ETFs applies directly to tokenization: almost any asset can be packaged into a tradable basket, but only market structure and arbitrage determine whether the wrapper works.

Integrity through transparency: ETFs already represented a major leap in transparency by making baskets of assets trade continuously on-exchange, with visible prices, intraday liquidity, and arbitrage-based alignment to underlying value. Tokenization builds on this foundation. Where blockchains can go further is in making issuance, transfers, and outstanding supply observable in near real time, potentially widening visibility into how the wrapper evolves relative to the underlying basket. As with ETFs, professional liquidity providers will remain central, but tokenization may incrementally extend the transparency that ETF market structure introduced, using new rails to reinforce the same core principle: prices stay fair when arbitrage is visible, credible, and executable.
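As a sketch of what observable issuance enables, the Python below rebuilds outstanding supply by replaying mint and burn events and compares it with the underlying units reported as held in custody. The event schema (`SupplyEvent` with `kind` and `amount`) and the custody figure are hypothetical inputs: in practice supply would be read from on-chain events, while custody holdings of off-chain assets would typically come from the issuer or custodian.

```python
from dataclasses import dataclass
from typing import Iterable


@dataclass(frozen=True)
class SupplyEvent:
    kind: str    # "mint" or "burn" (hypothetical event schema)
    amount: int  # number of tokens created or destroyed


def outstanding_supply(events: Iterable[SupplyEvent]) -> int:
    """Net tokens outstanding after replaying every mint and burn."""
    supply = 0
    for e in events:
        if e.kind == "mint":
            supply += e.amount
        elif e.kind == "burn":
            supply -= e.amount
        else:
            raise ValueError(f"unknown event kind: {e.kind!r}")
    return supply


def backing_ratio(events: Iterable[SupplyEvent], units_in_custody: float,
                  units_per_token: float = 1.0) -> float:
    """Underlying units held per unit owed to token holders (1.0 = fully backed)."""
    owed = outstanding_supply(events) * units_per_token
    if owed == 0:
        raise ValueError("no tokens outstanding")
    return units_in_custody / owed


events = [SupplyEvent("mint", 1_000), SupplyEvent("mint", 500), SupplyEvent("burn", 200)]
print(outstanding_supply(events))  # 1300
```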

24/7 Trading and Overnight Pricing

One of the most important features of tokenized markets is their ability to trade continuously, even when underlying markets are closed. For anyone who has spent time trading ETFs globally, this is not something new, but a familiar and highly valuable market‑structure capability. Continuous trading outside local market hours allows prices to incorporate new information as it emerges, rather than waiting for the next market open, and enables investors across time zones to transfer risk when they actually need to. These prices reflect informed expectations—built using correlated instruments, futures, FX, and broader market signals—in exactly the same way international and cross‑time zone ETFs have operated for decades.

U.S.-listed ETFs that hold European or Asian equities already demonstrate how credible pricing can exist when the underlying cash market is closed. These ETFs continue to trade during the U.S. session after local markets have shut, with prices reflecting updated expectations based on futures, FX, ADRs, and other correlated signals rather than stale closing prints. In practice, market makers and authorized participants continuously estimate an intrinsic fair value, including an expected next-open for underlying assets, and quote around it.
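A single-factor version of this fair-value estimate fits in a few lines of Python. It is a simplified sketch, not how any particular desk prices a U.S.-listed European equity ETF: it assumes one correlated index future, a constant `beta`, and an FX rate quoted as USD per unit of local currency, whereas, as noted above, practitioners also draw on ADRs and other correlated signals.

```python
def estimated_fair_value(nav_usd_at_close: float, fut_at_close: float, fut_now: float,
                         fx_at_close: float, fx_now: float, beta: float = 1.0) -> float:
    """Roll a basket's last struck NAV forward while its home market is closed.

    nav_usd_at_close: NAV converted to USD at the local close.
    fut_at_close, fut_now: price of a correlated index future at the local close and now.
    fx_at_close, fx_now: USD per unit of local currency (e.g. EURUSD) at the close and now.
    beta: assumed sensitivity of the basket to the future (1.0 = moves one-for-one).
    """
    equity_move = beta * (fut_now / fut_at_close - 1.0)
    fx_move = fx_now / fx_at_close
    return nav_usd_at_close * (1.0 + equity_move) * fx_move


# Futures up 1% since the European close, FX unchanged: fair value rises about 1%.
fv = estimated_fair_value(70.0, fut_at_close=5000.0, fut_now=5050.0, fx_at_close=1.10, fx_now=1.10)
```

Market makers would then quote bids and offers around an estimate of this kind rather than around the stale local closing prices.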

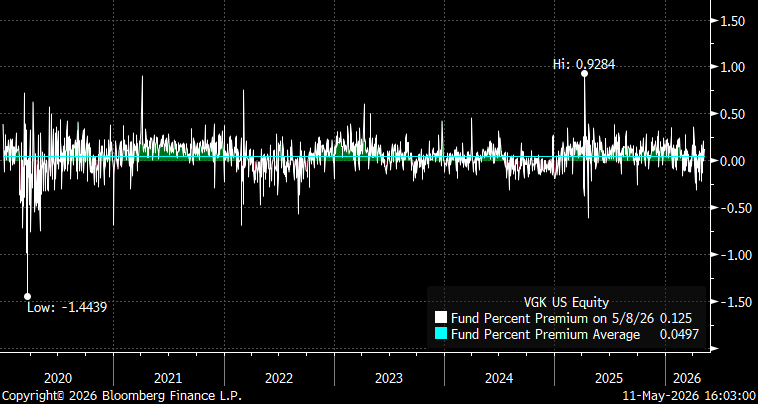

This is visible in VGK (Vanguard FTSE Europe ETF), a ~$30bn ETF tracking European equities but traded in the U.S. Despite extended off-hours trading, pricing remains tightly anchored: even during Covid, the discount only briefly exceeded -1.5%, while the average premium over time is ~5bps.
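Statistics like the average premium and the deepest discount are computed from paired series of market prices and NAVs; a -1.5% discount is -150 bps, and ~5bps is a 0.05% premium. A minimal Python sketch follows. The input series are made-up illustrative numbers, not VGK data.

```python
from statistics import mean


def premium_bps(price: float, nav: float) -> float:
    """Premium (positive) or discount (negative) of market price to NAV, in basis points."""
    return (price - nav) / nav * 10_000


def premium_summary(prices: list[float], navs: list[float]) -> tuple[float, float]:
    """Return (average premium, deepest discount) in basis points."""
    if len(prices) != len(navs) or not prices:
        raise ValueError("need equal-length, non-empty price and NAV series")
    prems = [premium_bps(p, n) for p, n in zip(prices, navs)]
    return mean(prems), min(prems)


# Illustrative numbers only: three quiet days and one stressed day.
avg, worst = premium_summary([100.04, 99.98, 98.50, 100.03], [100.0, 100.0, 100.0, 100.0])
```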

Now apply the same concept to tokenization:

A tokenized Apple stock, for instance, can trade on Saturday based on an assessment of Apple’s likely trading price at Monday’s open. If big news broke on Saturday, you’d see the token react immediately. Liquidity providers would quote a price factoring in that news (possibly hedging with related instruments such as Nasdaq futures, where available). By Monday’s market open, Apple’s real stock price would likely catch up to wherever the token traded over the weekend. In effect, the token becomes a leading indicator for price discovery in the underlying stock.
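One simple way to check whether a token really acts as a leading indicator is to measure how much of the weekend gap it had already priced in by the time the underlying reopened. The metric `gap_anticipated` and the prices below are hypothetical, chosen only to illustrate the calculation.

```python
def gap_anticipated(friday_close: float, token_last_weekend: float, monday_open: float) -> float:
    """Share of the Friday-close-to-Monday-open move already priced by the token.

    1.0: the token's last weekend price matched Monday's open.
    0.0: the token never moved away from Friday's close.
    Above 1.0 the token overshot; below 0 it moved the wrong way.
    """
    gap = monday_open - friday_close
    if gap == 0:
        raise ValueError("underlying did not gap; nothing to anticipate")
    return (token_last_weekend - friday_close) / gap


# Stock closed Friday at 200, token last traded at 206 on Sunday, stock opened Monday at 208.
print(gap_anticipated(200.0, 206.0, 208.0))  # 0.75
```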

Continuous risk transfer: Market participants (especially across different time zones) don’t all operate on U.S. Eastern Time. A European investor holding a tokenized U.S. bond fund would likely value the ability to adjust positions at 8pm CET on a Friday, rather than waiting until Monday. For a market maker like us, it means we can facilitate those trades and manage our inventory risk dynamically, instead of facing gap risk when markets reopen.

Overnight funding and spreads: Yes, providing liquidity 24/7 entails some cost. We account for the “cost of carry” or risk of holding a position when underlying markets are closed. In practice this just means spreads might be wider during off-hours trading, just as they are for, say, currency markets on a holiday, but the key is that the market stays open. And as more participants join and risk management tools improve, these costs diminish. In the long run, a 24/7 market should become as natural as the 24/5 FX market is today.
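Why off-hours spreads widen can be sketched with a hypothetical quoting rule: the longer the wait until the underlying reopens (and hedging becomes cheap again), the larger the price move a liquidity provider may have to absorb. The Python below assumes a random walk, so the expected one-sigma move grows with the square root of time; `risk_multiple`, the calendar-hour year and the volatility figure are illustrative assumptions, not how any specific firm sets spreads.

```python
import math


def off_hours_half_spread_bps(base_bps: float, annual_vol: float, hours_to_reopen: float,
                              risk_multiple: float = 0.1,
                              hours_per_year: float = 24 * 365) -> float:
    """Hypothetical quoting rule: widen a base half-spread by a fraction of the
    one-sigma move expected before the underlying market reopens.

    Random-walk assumption: sigma over h hours = annual_vol * sqrt(h / hours_per_year).
    """
    sigma_bps = annual_vol * math.sqrt(hours_to_reopen / hours_per_year) * 10_000
    return base_bps + risk_multiple * sigma_bps


# Same asset (25% annualized vol): a short overnight wait versus a long weekend wait.
overnight = off_hours_half_spread_bps(2.0, 0.25, hours_to_reopen=12)
weekend = off_hours_half_spread_bps(2.0, 0.25, hours_to_reopen=40)
```

Better hedging instruments and more participants effectively lower `risk_multiple`, which is one way to read the claim that these costs diminish over time.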

Tokenization extending assets to 24/7 trading is an evolution of what ETFs and electronic markets already started. We are pushing the boundaries from “extended hours” to “fully continuous” markets. Every step of that journey – from floor trading to electronic trading to global 24-hour trading – has ultimately brought narrower spreads, fewer gaps, and more opportunities for risk management. Tokenization is just the next logical step on that continuum, leveraging modern tech to get us to always-on markets.

What’s In It for Institutions?

It’s impossible to get institutions (whether banks, asset managers, or market makers) to adopt a new structure unless it clearly improves their bottom line or reduces risk. Tokenization, when done right, promises several such improvements – many of which echo the advantages ETFs delivered, now taken a step further.

1. Faster Settlement = Lower Risk and Funding Cost.

Traditional securities settle on a T+1 or T+2 cycle (trade date plus one or two business days; U.S. equities moved from T+2 to T+1 in May 2024, while most European markets remain on T+2). That delay means capital is tied up to cover settlement risk (margin requirements at clearinghouses, credit lines with counterparties, etc.). Tokens, by operating on blockchain rails, can settle near-instantly or on a T+0 basis. In practical terms, that cuts counterparty risk and frees up capital that would otherwise sit idle to back unsettled trades.
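The capital effect is straightforward arithmetic under a steady-state assumption: with constant daily volume, roughly `settlement_days` days of trades are unsettled at any moment. The Python sketch below uses a hypothetical flat margin rate and funding rate; actual clearinghouse margin models are risk-based and considerably more complex.

```python
def unsettled_notional(daily_notional: float, settlement_days: float) -> float:
    """Notional awaiting settlement at any moment, assuming steady daily volume."""
    return daily_notional * settlement_days


def capital_released(daily_notional: float, margin_rate: float, old_days: float,
                     new_days: float, funding_rate: float = 0.0) -> tuple[float, float]:
    """Collateral freed by shortening the settlement cycle, and its annual funding saving.

    margin_rate: assumed fraction of unsettled notional that must be collateralized.
    funding_rate: assumed annual cost of funding that collateral.
    """
    freed = (unsettled_notional(daily_notional, old_days)
             - unsettled_notional(daily_notional, new_days)) * margin_rate
    return freed, freed * funding_rate


# Hypothetical desk: $1bn/day, 2% margin, moving from T+2 to T+0, 5% funding cost.
freed, saving = capital_released(1e9, 0.02, old_days=2, new_days=0, funding_rate=0.05)
```

Under these assumptions, roughly $40m of collateral is released, saving about $2m a year in funding.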

2. Collateral Mobility, Reuse, and Composability.

In today’s market, using an ETF as collateral requires multiple intermediaries and operational steps, typically via prime brokers, margin frameworks, or repo markets. In a tokenized setup, this process can become significantly more direct. Assets can be pledged programmatically, released upon repayment, and reused without repeated settlement friction.

This is often described as composability: the ability for assets to interact seamlessly across different financial functions. A tokenized asset can be held, pledged, rehypothecated, or redeployed across applications without needing to be moved through separate legal and operational layers each time.

The result is higher collateral mobility and more efficient reuse. The same asset can support more activity because each transfer or re-pledge carries less friction, improving overall capital efficiency.

For institutional traders, this means doing more with less. Instead of maintaining fragmented pools of collateral across derivatives, prime brokerage, and clearing requirements, a tokenized pool could, in principle, be dynamically allocated in real time to where it is most needed.
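The pledge-and-release cycle described above can be modeled as a small state machine. The Python below is a toy, in-memory sketch under stated assumptions: `CollateralLedger` and its methods are hypothetical names, there is no legal or custody layer, rehypothecation is not modeled, and on a real chain this logic would live in a token or lending contract with its own access controls.

```python
class CollateralLedger:
    """Toy in-memory model of programmable pledging (hypothetical, not a real
    token standard or smart-contract API)."""

    def __init__(self) -> None:
        self.free: dict[str, int] = {}                      # owner -> unencumbered tokens
        self.pledges: dict[int, tuple[str, str, int]] = {}  # id -> (owner, lender, amount)
        self._next_id = 0

    def deposit(self, owner: str, amount: int) -> None:
        self.free[owner] = self.free.get(owner, 0) + amount

    def pledge(self, owner: str, lender: str, amount: int) -> int:
        """Lock tokens in favor of a lender; they stay encumbered until released or seized."""
        if self.free.get(owner, 0) < amount:
            raise ValueError("insufficient unencumbered tokens")
        self.free[owner] -= amount
        pledge_id = self._next_id
        self._next_id += 1
        self.pledges[pledge_id] = (owner, lender, amount)
        return pledge_id

    def release(self, pledge_id: int) -> None:
        """Loan repaid: tokens return to the owner and can be pledged again at once."""
        owner, _lender, amount = self.pledges.pop(pledge_id)
        self.free[owner] += amount

    def seize(self, pledge_id: int) -> None:
        """Default: tokens transfer to the lender."""
        _owner, lender, amount = self.pledges.pop(pledge_id)
        self.free[lender] = self.free.get(lender, 0) + amount


ledger = CollateralLedger()
ledger.deposit("fund", 100)
first = ledger.pledge("fund", "bank_a", 60)
ledger.release(first)                          # loan repaid
second = ledger.pledge("fund", "bank_b", 100)  # same tokens reused immediately
```

Each state change is a single ledger update rather than a chain of settlement instructions between intermediaries, which is the friction reduction the composability argument relies on.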

3. 24/7 Access to Liquidity = Less Gap Risk.

This ties back to the earlier discussion: if you’re an institution holding an asset and something happens off-hours, you’re currently stuck until the underlying market reopens. With tokenization offering round-the-clock markets, institutions can hedge or adjust positions at any time, reducing risk.

From an institutional adoption perspective, the rationale is straightforward: tokenization only matters if it delivers tangible gains in efficiency and risk management. Those dynamics, and why they matter for large allocators and liquidity providers, are covered in more detail in Tokenization in Capital Markets: A Market Maker’s Perspective, which looks specifically at settlement efficiency, collateral mobility, and balance‑sheet implications through a market‑structure lens.

Conclusion: Not “If” But “How”

The current tokenization dialogue closely resembles the early days of ETFs: initial skepticism, early traction in niche segments, and increasing institutional involvement. That same pattern ultimately transformed ETFs into a $10+ trillion market.

I firmly believe tokenization is on the same path, because the structural forces pushing it forward are the same ones that made ETFs successful:

Market makers and traders are ready to keep pricing fair across any venues that arise.

Technology enables a leap in speed and connectivity.

Regulators, in time, come to see the benefits and adjust frameworks accordingly.

Importantly, this isn’t about replacing one asset class with another – we’re not swapping stocks for some new “token asset”. It is about migrating financial markets onto more efficient infrastructure, asset class by asset class, while preserving the economic characteristics investors already understand.

At Flow Traders, our perspective is informed by long experience in ETF market making and the role that structure, arbitrage, and liquidity play in building resilient markets. That experience now directly informs how we approach tokenized instruments. The parallels are instructive, the differences meaningful. The practical question is no longer whether tokenization will play a role in capital markets, but how it can be implemented in a way that delivers the same outcomes ETFs ultimately achieved: deep liquidity, tight pricing, and broad institutional adoption.

Viewed through that lens, tokenization fits within the continuum of market‑structure innovation. The relevant test is not technological novelty, but whether it improves efficiency, access, and robustness at the system level. Where those conditions are met, tokenization is not merely comparable to the ETF evolution—it represents its logical continuation. As with previous structural shifts, those who engage early with its mechanics and incentives will be best positioned as the market matures.